In the AI Era, Generating Answers Is Easy.

Evaluating Them Correctly Is the Real Skill.

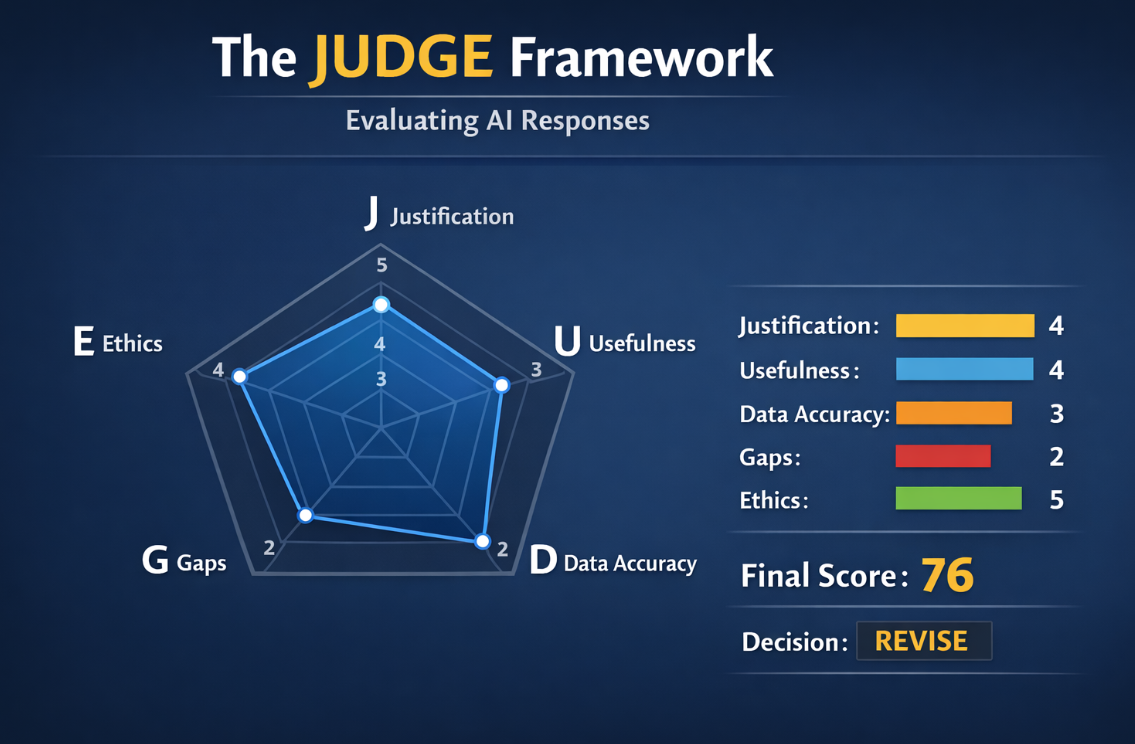

JUDGE: A structured framework for evaluating AI-generated responses before they are used in business, education, research, or decision-making.

Framework Snapshot

Evaluating AI Responses

Five dimensions. One evaluation method.

JUDGE turns vague confidence into structured evaluation. Instead of asking “Does this answer sound good?”, evaluators ask five disciplined questions.

Justification

Is the reasoning logical and well-supported?

Uncertainty

Are assumptions, limits, and unknowns acknowledged?

Data Sources

Are the sources credible, current, and verifiable?

Gaps

Is anything important missing from the response?

Ethics

Is the output responsible, fair, and safe to use?

About the JUDGE Framework

Artificial Intelligence can generate answers quickly — but not all answers are reliable. The JUDGE Framework provides a structured method to evaluate AI-generated responses before they are used in business, research, education, or decision-making.

Real-World Hallucination Example: A Convincing AI Error

Generative AI can produce responses that sound highly credible while still being wrong. For example, an AI may claim that a 2021 study by Stanford economist Nicholas Bloom and the National Bureau of Economic Research showed that remote work increased productivity by 13% across major U.S. companies during the pandemic.

This sounds believable because it includes a real economist, a respected institution, a specific statistic, and professional wording. However, the claim is misleading. The 13% figure came from a specific experiment involving the Chinese company Ctrip, not from major U.S. companies, and the NBER reference is inaccurate in that context.

This is a subtle hallucination: the AI combines real facts into a false conclusion. Examples like this show why structured evaluation is essential, and why the JUDGE Framework is needed to assess AI outputs before they are used in real-world decisions.

Today, AI outputs are often reviewed inconsistently. Different people may interpret the same AI response differently, relying on intuition rather than a clear evaluation method. This can lead to unreliable decisions, overlooked risks, or acceptance of incorrect information.

The JUDGE Framework introduces a consistent evaluation structure so that AI responses can be assessed systematically across teams, organizations, and academic settings. Instead of asking “Does this answer or report sound correct?”, the framework evaluates AI outputs across five dimensions.

- J – Justification – Is the reasoning logical and well-supported?

- U – Uncertainty – Are assumptions and limitations acknowledged?

- D – Data Sources – Are the sources credible and verifiable?

- G – Gaps – Is any important information missing?

- E – Ethics – Is the output responsible and unbiased?

Each dimension is scored, producing an overall evaluation and a final decision: Accept, Revise, or Reject.

These scores can also be visualized using a JUDGE Radar Chart, helping evaluators quickly identify where an AI response is strong or weak.

For Students

- Learn how to evaluate AI outputs instead of trusting them blindly

- Use the same JUDGE spreadsheet across business, engineering, and technical disciplines

- Build a practical certification-ready skill for the AI workplace

For Universities

- Fits into a single lecture, module, workshop, or capstone activity

- Standardized scoring with Accept / Revise / Reject outcomes

- Easy to scale across departments and batches using the same evaluation sheet

For Companies

- Create an AI review checkpoint before outputs reach leadership or customers

- Apply one framework across strategy, marketing, legal, analytics, and operations

- Support AI governance with structured human evaluation

Certified AI Response Analyst

A practical certification built around real AI outputs, instructor-ready case files, and the JUDGE spreadsheet used to score each response.

Sample Outcomes

Strong logic, credible sources, reasonable caution.

Useful direction, but missing key details or evidence.

Unverifiable, risky, or fundamentally unreliable output.

Try the framework with real case files.

Students, faculty, and companies can download the core worksheet and practice scenarios across different batches, disciplines, and decision levels.

JUDGE Evaluation Spreadsheet

The standard scoring worksheet used across all batches and disciplines.

DownloadCase File 1 — Accept

A strong AI-generated business recommendation that is acceptable for further planning.

DownloadCase File 2 — Revise

A plausible AI marketing strategy that requires more evidence and analysis before use.

DownloadCase File 3 — Reject

An AI-generated health-tech claim with unverifiable research and major regulatory risk.

DownloadTry Examples

Explore additional JUDGE case examples designed for learning and hands-on evaluation practice.

Explore